Introduction

As security professionals, we’re constantly looking for ways to reduce risk and improve the efficiency of our workflows. We have made great strides in using AI to identify malicious content, block threats, and discover and fix vulnerabilities. We also published the Secure AI Framework (SAIF), a conceptual framework for secure AI systems, to ensure we’re deploying AI in a responsible manner.

Today we’re highlighting another way we use generative AI to help defenders gain the advantage: leveraging large language models (LLMs) to speed up our security and privacy incident workflows.

Incident management is a team sport. We have to summarize security and privacy incidents for different audiences, including executives, leads, and partner teams. This can be a tedious and time-consuming process that depends heavily on the target group and the complexity of the incident. We estimate that writing a thorough summary can take nearly an hour, and more complex communications can take several hours. We hypothesized that generative AI could digest information much faster, freeing up our incident responders to focus on other, more critical tasks, and it proved true. Using generative AI, we could write summaries 51% faster while also improving their quality.

Our incident response approach

When we suspect a potential data incident, for example, we follow a rigorous process to manage it: from identification of the problem, through coordination of experts and tools, to its resolution and then closure. At Google, when an incident is reported, our Detection & Response teams work to restore normal service as quickly as possible while meeting both regulatory and contractual compliance requirements. They do this by following the five main steps in the Google incident response program:

- Identification: Monitoring security events to detect and report on potential data incidents, using advanced detection tools, signals, and alert mechanisms to provide early indication of potential incidents.

- Coordination: Triaging the reports by gathering facts and assessing the severity of the incident based on factors such as potential harm to customers, nature of the incident, type of data that might be affected, and the impact of the incident on customers. A communication plan with appropriate leads is then determined.

- Resolution: Gathering key facts about the incident, such as root cause and impact, and integrating additional resources as needed to implement necessary fixes as part of remediation.

- Closure: After the remediation efforts conclude and the data incident is resolved, reviewing the incident and response to identify key areas for improvement.

- Continuous improvement: Crucial for the development and maintenance of incident response programs. Teams work to improve the program based on lessons learned, ensuring that necessary teams, training, processes, resources, and tools are maintained.

Google’s Incident Response Process diagram flow

Leveraging generative AI

Our detection and response processes are critical in protecting our billions of global users from the growing threat landscape, which is why we’re continuously looking for ways to improve them with the latest technologies and techniques. The growth of generative AI has brought incredible potential in this area, and we were eager to explore how it could help us improve parts of the incident response process. We started by leveraging LLMs not only to pioneer modern approaches to incident response, but also to ensure that our processes are efficient and effective at scale.

Managing incidents can be a complex process, and an additional factor is effective internal communication to leads, executives, and stakeholders on the threats and status of incidents. Effective communication is critical because it properly informs executives so that they can take any necessary actions, and because it helps meet regulatory requirements. Leveraging LLMs for this type of communication can save significant time for incident commanders while improving quality at the same time.

Humans vs. LLMs

Given that LLMs have summarization capabilities, we wanted to explore whether they can generate summaries on par with, or as well as, humans. We ran an experiment that took 50 human-written summaries from native and non-native English speakers and 50 LLM-written ones produced with our best (and final) prompt, and presented them to security teams without revealing the author.

We learned that the LLM-written summaries covered all of the key points, were rated 10% higher than their human-written equivalents, and cut the time necessary to draft a summary in half.

Comparison of human vs LLM content completeness

Comparison of human vs LLM writing styles

Managing risks and protecting privacy

Leveraging generative AI is not without risks. To mitigate the risks around potential hallucinations and errors, any LLM-generated draft must be reviewed by a human. But not all risks come from the LLM: human misinterpretation of a fact or statement generated by the LLM can also happen. That is why it’s important to ensure there is human accountability, as well as to monitor quality and feedback over time.

Given that our incidents can contain a mixture of confidential, sensitive, and privileged data, we had to ensure we built an infrastructure that does not store any data. Every component of this pipeline, from the user interface to the LLM to output processing, has logging turned off. The LLM itself does not use any input or output for retraining. Instead, we use metrics and indicators to ensure it is working properly.

Input processing

The type of data we process during incidents can be messy and often unstructured: free-form text, logs, images, links, impact stats, timelines, and code snippets. We needed to structure all of that data so the LLM “knew” which part of the information serves what purpose. For that, we first replaced long and noisy sections of code and logs with self-closing tags ( and

During prompt engineering, we refined this approach and added additional tags such as

Sample {incident} input
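The replacement of noisy code and log sections with self-closing tags can be sketched roughly as follows. This is a minimal illustration, not the production pipeline: the tag names `<CodeSection/>` and `<Logs/>`, the timestamp pattern used to detect log lines, the indentation heuristic used to detect code, and the `min_run` threshold are all assumptions made for the example.

```python
import re
from itertools import groupby

# Hypothetical placeholder tags (the tag names here are assumptions).
CODE_TAG = "<CodeSection/>"
LOGS_TAG = "<Logs/>"

# Assumption: log lines start with a timestamp such as "2024-01-01 10:00:00".
LOG_LINE = re.compile(r"^\d{4}-\d{2}-\d{2}[T ]\d{2}:\d{2}:\d{2}")


def classify(line):
    """Label a line as 'log', 'code', or 'text' using simple heuristics."""
    if LOG_LINE.match(line):
        return "log"
    # Assumption: pasted code snippets are indented by four spaces or a tab.
    if line.startswith(("    ", "\t")):
        return "code"
    return "text"


def compress_noise(text, min_run=3):
    """Replace each run of at least `min_run` code or log lines with one tag."""
    out = []
    for kind, group in groupby(text.splitlines(), key=classify):
        lines = list(group)
        if kind != "text" and len(lines) >= min_run:
            out.append(CODE_TAG if kind == "code" else LOGS_TAG)
        else:
            # Short runs and ordinary text are kept verbatim.
            out.extend(lines)
    return "\n".join(out)
```

Collapsing a long run into a single tag keeps the position of the code or log section visible to the model while spending far fewer tokens on it.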

Prompt engineering

Once we added structure to the input, it was time to engineer the prompt. We started simple by exploring how LLMs can view and summarize all of the current incident facts with a short task:

Caption: First prompt version

Limits of this prompt:

- The summary was too long, especially for executives trying to understand the risk and impact of the incident

- Some important facts were not covered, such as the incident’s impact and its mitigation

- The writing was inconsistent and did not follow our best practices such as “passive voice”, “tense”, “terminology” or “format”

- Some irrelevant incident data was being integrated into the summary from email threads

- The model struggled to understand what the most relevant and up-to-date information was

For version 2, we tried a more elaborate prompt that would address the problems above: we told the model to be concise, and we explained what a well-written summary should be about, namely the main incident response steps (coordination and resolution).

Second prompt version

Limits of this prompt:

- The summaries still did not always succinctly and accurately address the incident in the format we were expecting

- At times, the model lost sight of the task or did not take all the guidelines into account

- The model still struggled to stick to the latest updates

- We noticed a tendency to draw conclusions on hypotheses with some minor hallucinations

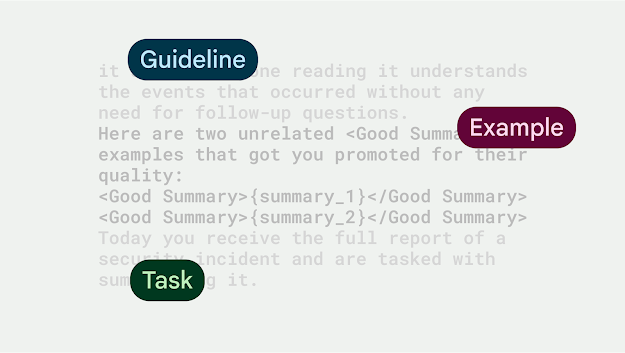

For the final prompt, we inserted 2 human-crafted summary examples and introduced a

Final prompt

This produced outstanding summaries, in the structure we wanted, with all key points covered, and almost without any hallucinations.
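To make the idea of a prompt with human-crafted examples concrete, here is a minimal sketch of assembling a few-shot prompt. The function name `build_prompt`, the `<Example N>` and `<Input>` tags, and the overall layout are hypothetical and chosen for illustration; the actual prompt text and tags are not reproduced here.

```python
def build_prompt(task, guidelines, examples, incident_input):
    """Assemble a few-shot summarization prompt (illustrative layout only)."""
    parts = [task.strip()]

    # Writing guidelines are listed once, before the examples.
    if guidelines:
        parts.append("Guidelines:\n" + "\n".join(f"- {g}" for g in guidelines))

    # Each human-written summary is wrapped in its own hypothetical tag so
    # the model can tell the examples apart from the incident data.
    for i, example in enumerate(examples, start=1):
        parts.append(f"<Example {i}>\n{example.strip()}\n</Example {i}>")

    # The structured incident input goes last, closest to the answer slot.
    parts.append(f"<Input>\n{incident_input.strip()}\n</Input>")
    parts.append("Summary:")
    return "\n\n".join(parts)
```

Placing the examples between the guidelines and the input is one reasonable ordering; other layouts may work equally well and would need to be evaluated against real incidents.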

Workflow integration

In integrating the prompt into our workflow, we wanted to ensure it was complementing the work of our teams rather than solely writing communications. We designed the tooling so that the UI had a ‘Generate Summary’ button, which would pre-populate a text field with the summary that the LLM proposed. A human user can then either accept the summary and have it added to the incident, make manual changes to the summary and accept it, or discard the draft and start again.

UI showing the ‘generate draft’ button and LLM proposed summary around a fake incident
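The three outcomes of the human review step (accept, edit and accept, discard) can be modeled as a small sketch. The `Decision` enum, the `Incident` class, and `review_draft` are hypothetical names invented for this example and do not describe the actual tooling.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ACCEPT = "accept"    # use the LLM draft as-is
    EDIT = "edit"        # use the human-edited version of the draft
    DISCARD = "discard"  # drop the draft and start again


@dataclass
class Incident:
    summaries: list = field(default_factory=list)


def review_draft(incident, draft, decision, edited_text=None):
    """Apply the reviewer's decision; return True if a summary was added."""
    if decision is Decision.ACCEPT:
        incident.summaries.append(draft)
        return True
    if decision is Decision.EDIT:
        if not edited_text:
            raise ValueError("an edited summary is required for EDIT")
        incident.summaries.append(edited_text)
        return True
    # DISCARD: nothing is stored, so no draft reaches the incident record.
    return False
```

The point of the design is that nothing is added to the incident without an explicit human decision.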

Quantitative wins

Our newly built tool produced well-written and accurate summaries, resulting in 51% time saved per incident summary drafted by an LLM versus a human.

Time savings using LLM-generated summaries (sample size: 300)

The only edge cases we have seen were around hallucinations when the input size was small relative to the prompt size. In these cases, the LLM made up most of the summary and key points were incorrect. We fixed this programmatically: if the input size is smaller than 200 tokens, we don’t call the LLM for a summary and let humans write it.
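A guard of this kind can be sketched as follows. Only the 200-token threshold comes from the text above; the whitespace-based `count_tokens` is a crude stand-in for a real tokenizer, and `call_llm` is a hypothetical callable supplied by the caller.

```python
MIN_INPUT_TOKENS = 200  # threshold stated in the text


def count_tokens(text):
    """Crude token estimate (assumption): whitespace-separated words.

    A real deployment would use the tokenizer of the model being called.
    """
    return len(text.split())


def summarize_or_defer(incident_text, call_llm, count=count_tokens):
    """Return an LLM draft, or None when the input is too small to trust.

    `call_llm` is a hypothetical function mapping incident text to a draft.
    Returning None signals that a human should write the summary instead.
    """
    if count(incident_text) < MIN_INPUT_TOKENS:
        return None
    return call_llm(incident_text)
```

Returning `None` rather than raising lets the UI fall back to an empty text field for the human author.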

Evolving to more complex use cases: Executive updates

Given these results, we explored other ways to build upon the summarization success and apply it to more complex communications. We improved upon the initial summary prompt and ran an experiment to draft executive communications on behalf of the Incident Commander (IC). The goal of this experiment was to ensure executives and stakeholders quickly understand the incident facts, as well as to allow ICs to relay important information around incidents. These communications are complex because they go beyond just a summary: they include different sections (such as summary, root cause, impact, and mitigation), follow a specific structure and format, and adhere to writing best practices (such as neutral tone, active voice instead of passive voice, and minimal use of acronyms).

This experiment showed that generative AI can evolve beyond high-level summarization and help draft complex communications. Moreover, LLM-generated drafts reduced the time ICs spent writing executive summaries by 53%, while delivering at least on-par content quality in terms of factual accuracy and adherence to writing best practices.

What’s next

We are constantly exploring new ways to use generative AI to protect our users more efficiently and look forward to tapping into its potential as cyber defenders. For example, we are exploring using generative AI as an enabler of ambitious memory safety projects like teaching an LLM to rewrite C++ code to memory-safe Rust, as well as more incremental improvements to everyday security workflows, such as getting generative AI to read design documents and issue security recommendations based on their content.
