Sustaining Strategic Interoperability and Flexibility

In the fast-evolving landscape of generative AI, choosing the right components for your AI solution is critical. With the wide variety of available large language models (LLMs), embedding models, and vector databases, it's essential to navigate the choices wisely, as your decision will have important implications downstream.

A particular embedding model might be too slow for your specific application. Your system prompt approach might generate too many tokens, leading to higher costs. There are many similar risks involved, but the one that is often overlooked is obsolescence.
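To make the token-cost risk concrete, here is a minimal sketch of how prompt length feeds into monthly spend. The function name, the request volume, and the per-1,000-token prices are all placeholders chosen for illustration; they are not real vendor pricing.

```python
def estimate_monthly_cost(prompt_tokens, completion_tokens, requests_per_month,
                          price_in_per_1k, price_out_per_1k):
    """Estimate monthly spend for one LLM endpoint from average token counts."""
    per_request = (
        (prompt_tokens / 1000) * price_in_per_1k
        + (completion_tokens / 1000) * price_out_per_1k
    )
    return per_request * requests_per_month


# Placeholder prices and volumes, used only to compare two prompt designs.
short_prompt = estimate_monthly_cost(200, 300, 100_000, 0.001, 0.002)
long_prompt = estimate_monthly_cost(1200, 300, 100_000, 0.001, 0.002)
print(f"short system prompt: {short_prompt:.2f}")
print(f"long system prompt:  {long_prompt:.2f}")
```

Under these assumed numbers, adding 1,000 tokens to the system prompt more than doubles the monthly bill, even though the completions are the same length.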

As more capabilities and tools come online, organizations need to prioritize interoperability as they look to leverage the latest advancements in the field and discontinue outdated tools. In this environment, designing solutions that allow for seamless integration and evaluation of new components is essential for staying competitive.
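One common way to keep components swappable is to have application code depend on a small interface rather than on a specific vendor SDK. The sketch below illustrates that idea; `TextGenerator`, `EchoModel`, `UppercaseModel`, and `registry` are hypothetical names, and the two backends are stand-ins rather than real models.

```python
from typing import Protocol


class TextGenerator(Protocol):
    """Minimal contract every LLM backend is expected to satisfy (hypothetical)."""

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend used only to keep the sketch runnable."""

    def generate(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseModel:
    """A second stand-in backend with different behavior."""

    def generate(self, prompt: str) -> str:
        return prompt.upper()


def answer(model: TextGenerator, question: str) -> str:
    # Application code depends only on the interface, not on a vendor SDK.
    return model.generate(question)


# Swapping or retiring a backend becomes a one-line change in the registry.
registry: dict[str, TextGenerator] = {"echo": EchoModel(), "upper": UppercaseModel()}
```

With this shape, evaluating a newly released model means adding one more entry to `registry`, while the calling code stays unchanged.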

Confidence in the reliability and safety of LLMs in production is another critical concern. Implementing measures to mitigate risks such as toxicity, security vulnerabilities, and inappropriate responses is essential for ensuring user trust and compliance with regulatory requirements.

In addition to performance considerations, factors such as licensing, control, and security also influence another choice, between open source and commercial models:

- Commercial models offer convenience and ease of use, particularly for quick deployment and integration

- Open source models provide greater control and customization options, making them preferable for sensitive data and specialized use cases

With all this in mind, it's obvious why platforms like HuggingFace are extremely popular among AI builders. They provide access to state-of-the-art models, components, datasets, and tools for AI experimentation.

A good example is the robust ecosystem of open source embedding models, which have gained popularity for their flexibility and performance across a wide range of languages and tasks. Leaderboards such as the Massive Text Embedding Leaderboard offer valuable insights into the performance of various embedding models, helping users identify the most suitable options for their needs.

The same can be said about the proliferation of different open source LLMs, like Smaug and DeepSeek, and open source vector databases, like Weaviate and Qdrant.

With such a mind-boggling selection, one of the most effective approaches to choosing the right tools and LLMs for your organization is to immerse yourself in the live environment of these models, experiencing their capabilities firsthand to determine whether they align with your objectives before you commit to deploying them. The combination of DataRobot and the immense library of generative AI components at HuggingFace allows you to do just that.

Let's dive in and see how you can easily set up endpoints for models, explore and compare LLMs, and securely deploy them, all while enabling robust model monitoring and maintenance capabilities in production.

Simplify LLM Experimentation with DataRobot and HuggingFace

Note that this is a quick overview of the important steps in the process. You can follow the whole process step-by-step in this on-demand webinar by DataRobot and HuggingFace.

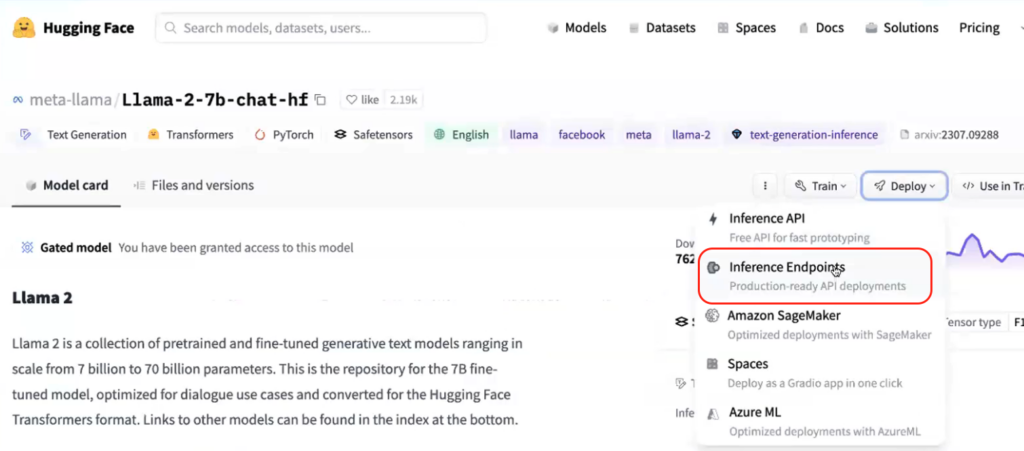

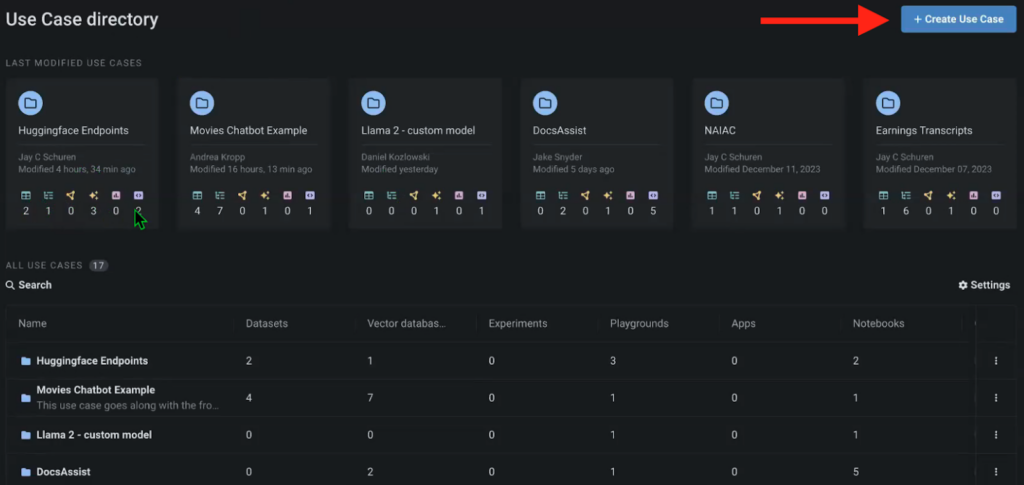

To start, we need to create the necessary model endpoints in HuggingFace and set up a new Use Case in the DataRobot Workbench. Think of a Use Case as an environment that contains all sorts of different artifacts related to that specific project, from datasets and vector databases to LLM Playgrounds for model comparison and related notebooks.

In this instance, we've created a use case to experiment with various model endpoints from HuggingFace.

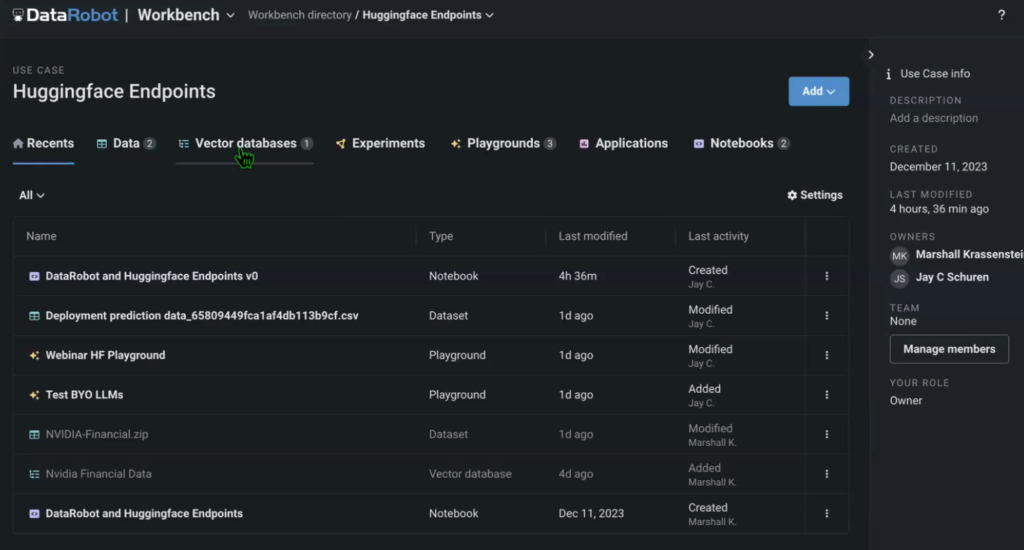

The use case also contains data (in this example, we used an NVIDIA earnings call transcript as the source), the vector database that we created with an embedding model called from HuggingFace, the LLM Playground where we'll compare the models, as well as the source notebook that runs the whole solution.
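For readers new to vector databases, the following toy sketch shows the core idea: chunks of the source text are turned into vectors, and a query retrieves the most similar chunk. Everything here is an assumption made for illustration: `embed` is a bag-of-words stand-in for a real embedding model, `TinyVectorStore` is a hypothetical in-memory class rather than the DataRobot vector database, and the two sample sentences are invented, not taken from the transcript.

```python
import math
from collections import Counter


def embed(text):
    """Toy bag-of-words 'embedding'; a real setup would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """Hypothetical in-memory store: keeps (chunk, vector) pairs and ranks by similarity."""

    def __init__(self):
        self.items = []

    def add(self, chunk):
        self.items.append((chunk, embed(chunk)))

    def search(self, query, k=1):
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


store = TinyVectorStore()
store.add("Data center revenue grew strongly this quarter")  # invented sample text
store.add("The company announced a new gaming product line")  # invented sample text
top = store.search("How did data center revenue change?")
```

Swapping `embed` for a different embedding model changes which chunks are retrieved, which is why the choice of embedding model matters for the quality of the final answers.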

You can build the use case in a DataRobot Notebook using default code snippets available in DataRobot and HuggingFace, as well as by importing and modifying existing Jupyter notebooks.

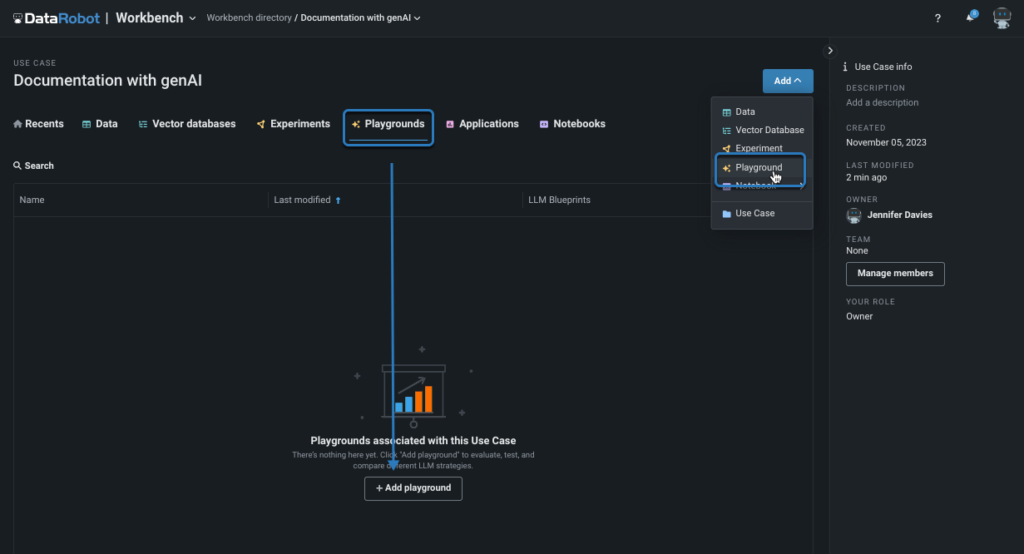

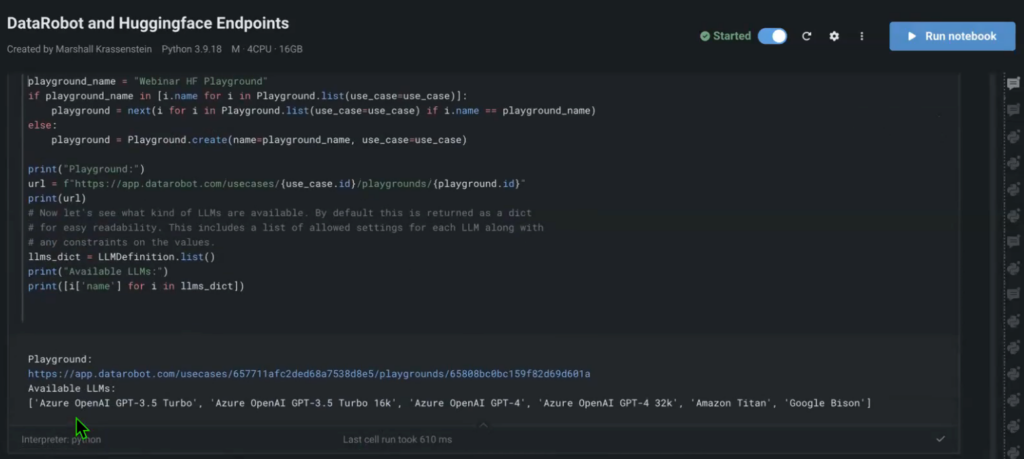

Now that you have all of the source documents, the vector database, and all of the model endpoints, it's time to build out the pipelines to compare them in the LLM Playground.

Traditionally, you could perform the comparison right in the notebook, with outputs showing up in the notebook. But this experience is suboptimal if you want to compare different models and their parameters.

The LLM Playground is a UI that allows you to run multiple models in parallel, query them, and receive outputs at the same time, while also letting you tweak the model settings and further compare the results. Another good candidate for experimentation is testing out different embedding models, as they might alter the performance of the solution depending on the language that's used for prompting and outputs.
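To give a sense of what such a comparison involves when done by hand in a notebook, here is a minimal sketch that sends one prompt to several models concurrently and collects the outputs side by side. `compare_models` is a hypothetical helper, and the two lambdas are stand-ins for calls to real model endpoints.

```python
from concurrent.futures import ThreadPoolExecutor


def compare_models(models, prompt):
    """Send one prompt to every model concurrently and return {name: output}."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: fut.result() for name, fut in futures.items()}


# Stand-in callables; in practice each would wrap a request to a model endpoint.
models = {
    "model_a": lambda p: f"A says: {p}",
    "model_b": lambda p: f"B says: {p[::-1]}",
}
results = compare_models(models, "hello")
for name, output in results.items():
    print(name, "->", output)
```

Even this small version leaves out parameter sweeps, result storage, and a way to view outputs next to each other, which is the work a comparison UI takes off your hands.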

This process abstracts away a lot of the steps that you'd have to perform manually in the notebook to run such complex model comparisons. The Playground also comes with several models by default (OpenAI GPT-4, Titan, Bison, etc.), so you can compare your custom models and their performance against these benchmark models.

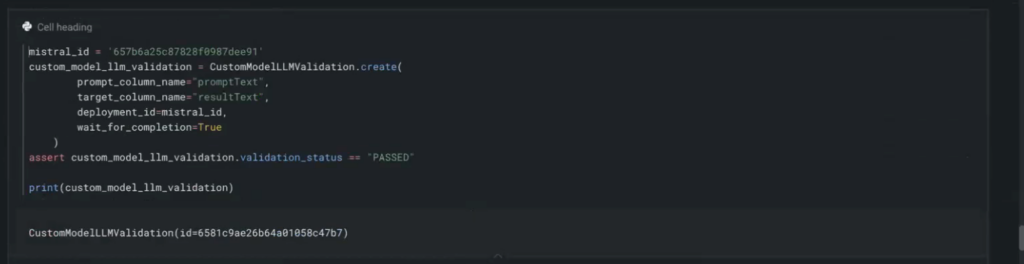

You can add each HuggingFace endpoint to your notebook with a few lines of code.
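The exact snippet used in the walkthrough appears only in the screenshots, so the following is a sketch under stated assumptions rather than the authors' code. It assumes a text-generation endpoint that accepts a JSON body with `inputs` and `parameters` and a bearer token for authentication; the URL, the token, and the helper names `build_request` and `query` are placeholders, and the payload schema may differ for other tasks or serving containers.

```python
import json
import urllib.request

ENDPOINT_URL = "https://example.invalid/my-endpoint"  # placeholder, not a real endpoint
API_TOKEN = "hf_xxx"  # placeholder token


def build_request(prompt, max_new_tokens=256, temperature=0.7):
    """Build (but do not send) a POST request for a text-generation endpoint."""
    payload = {
        "inputs": prompt,
        "parameters": {"max_new_tokens": max_new_tokens, "temperature": temperature},
    }
    return urllib.request.Request(
        ENDPOINT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_TOKEN}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def query(prompt):
    """Send the request and return the parsed JSON response."""
    with urllib.request.urlopen(build_request(prompt)) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Building the request is safe to run anywhere; query() needs a live endpoint.
req = build_request("Summarize the earnings call.")
```

Keeping request construction separate from the network call makes it easy to inspect the payload before pointing the code at a real endpoint.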

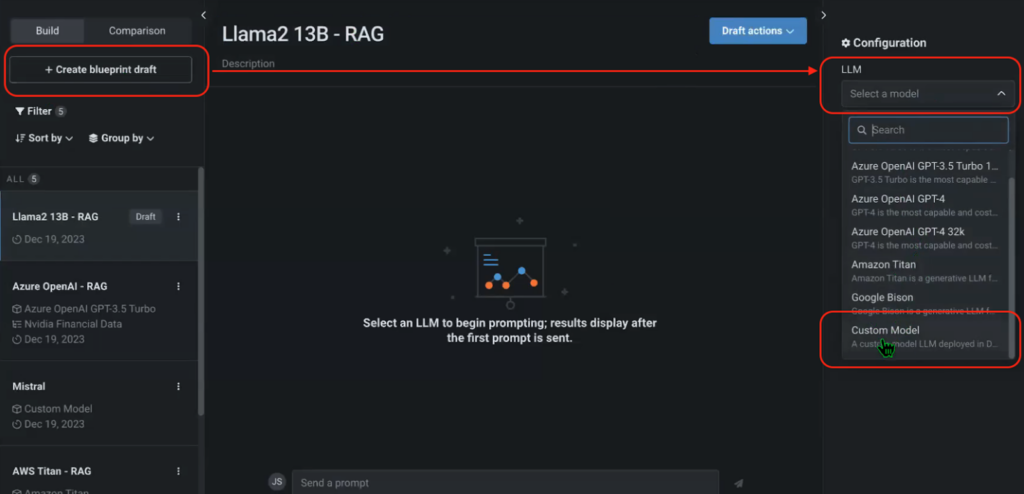

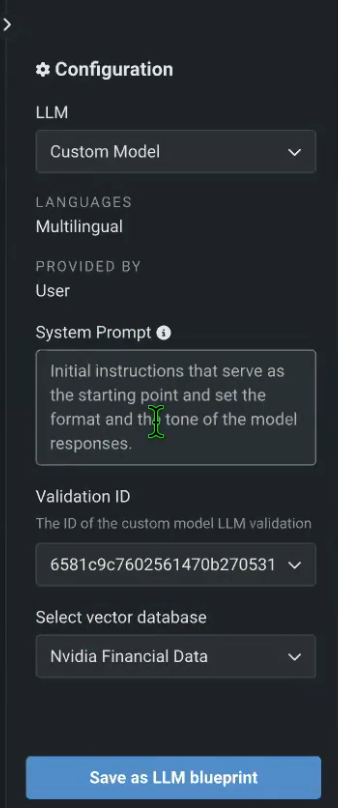

Once the Playground is in place and you've added your HuggingFace endpoints, you can go back to the Playground, create a new blueprint, and add each one of your custom HuggingFace models. You can also configure the System Prompt and select the preferred vector database (NVIDIA Financial Data, in this case).

Figures 6, 7. Adding and Configuring HuggingFace Endpoints in an LLM Playground

After you've done this for all of the custom models deployed in HuggingFace, you can properly start comparing them.

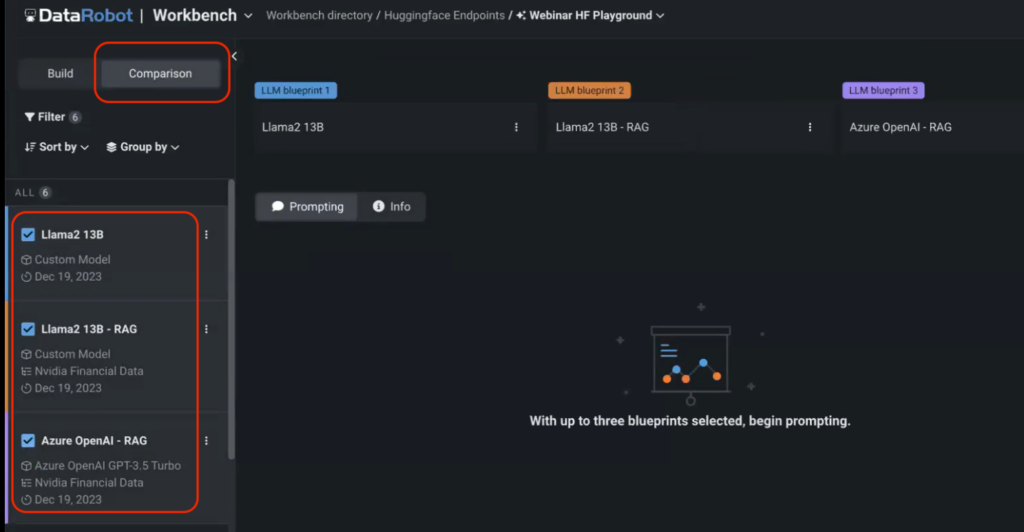

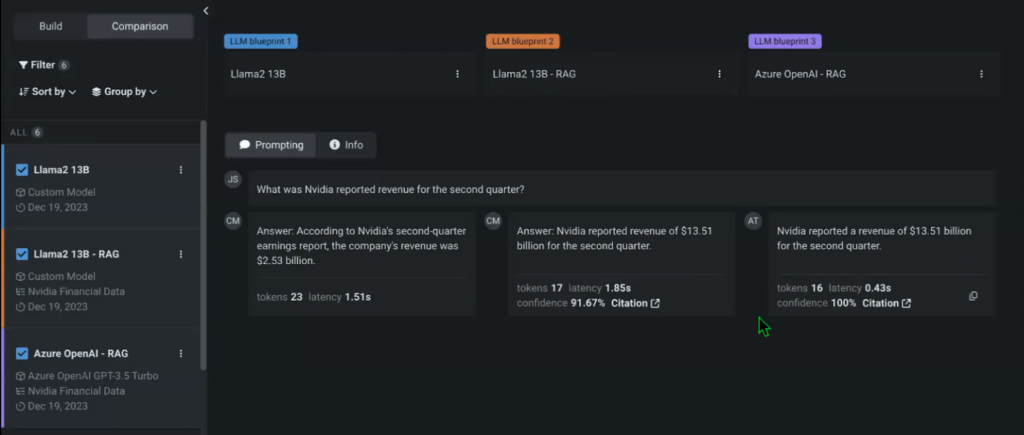

Go to the Comparison menu in the Playground and select the models that you want to compare. In this case, we're comparing two custom models served via HuggingFace endpoints with a default OpenAI GPT-3.5 Turbo model.

Note that we didn't specify the vector database for one of the models, so that we can compare the model's performance against its RAG counterpart. You can then start prompting the models and compare their outputs in real time.
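The difference between the two setups comes down to what the model sees in its prompt. The sketch below shows one plausible way to assemble a prompt with and without retrieved context; `build_prompt` and the prompt wording are assumptions for illustration, and the chunk strings are placeholders rather than real retrieved text.

```python
def build_prompt(question, context_chunks=None):
    """Return a prompt with retrieved context (RAG) or the bare question (no RAG)."""
    if not context_chunks:
        return f"Question: {question}\nAnswer:"
    context = "\n".join(f"- {chunk}" for chunk in context_chunks)
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}\nAnswer:"
    )


question = "What drove revenue growth?"
plain = build_prompt(question)
rag = build_prompt(question, ["<retrieved chunk 1>", "<retrieved chunk 2>"])
print(rag)
```

The non-RAG model receives only `plain`, so it has to answer from whatever it learned during training, while the RAG model can ground its answer in the retrieved chunks.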

There are many settings and iterations that you can add to any of your experiments using the Playground, including Temperature, the maximum limit of completion tokens, and more. You can immediately see that the non-RAG model, which doesn't have access to the NVIDIA Financial Data vector database, provides a different response that is also incorrect.
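As background on the Temperature setting, the sketch below shows the standard temperature-scaled softmax that turns a model's raw token scores into sampling probabilities. The function name and the three scores are made up for illustration; hosted models apply this internally, so this is not code you would run against the Playground.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Convert raw scores to probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
cold = softmax_with_temperature(logits, temperature=0.2)
hot = softmax_with_temperature(logits, temperature=2.0)
print("low temperature: ", [round(p, 3) for p in cold])
print("high temperature:", [round(p, 3) for p in hot])
```

At a low temperature almost all probability mass lands on the top-scoring token, which makes outputs more repeatable; at a high temperature the distribution flattens and outputs become more varied.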

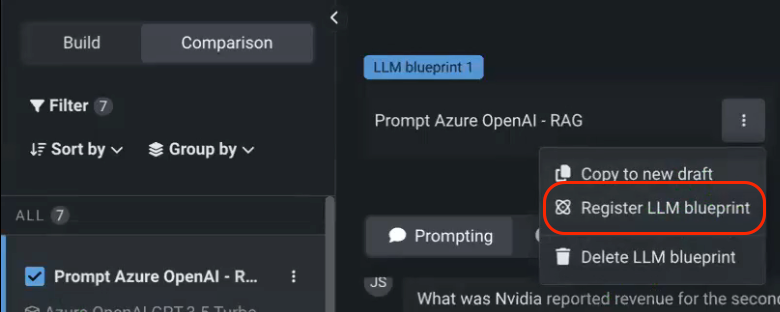

Once you're done experimenting, you can register the selected model in the AI Console, which is the hub for all of your model deployments.

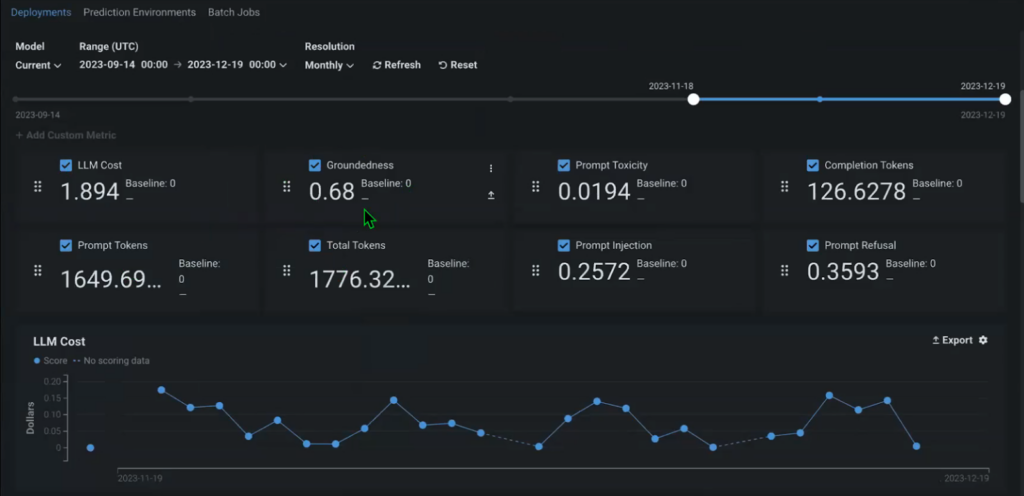

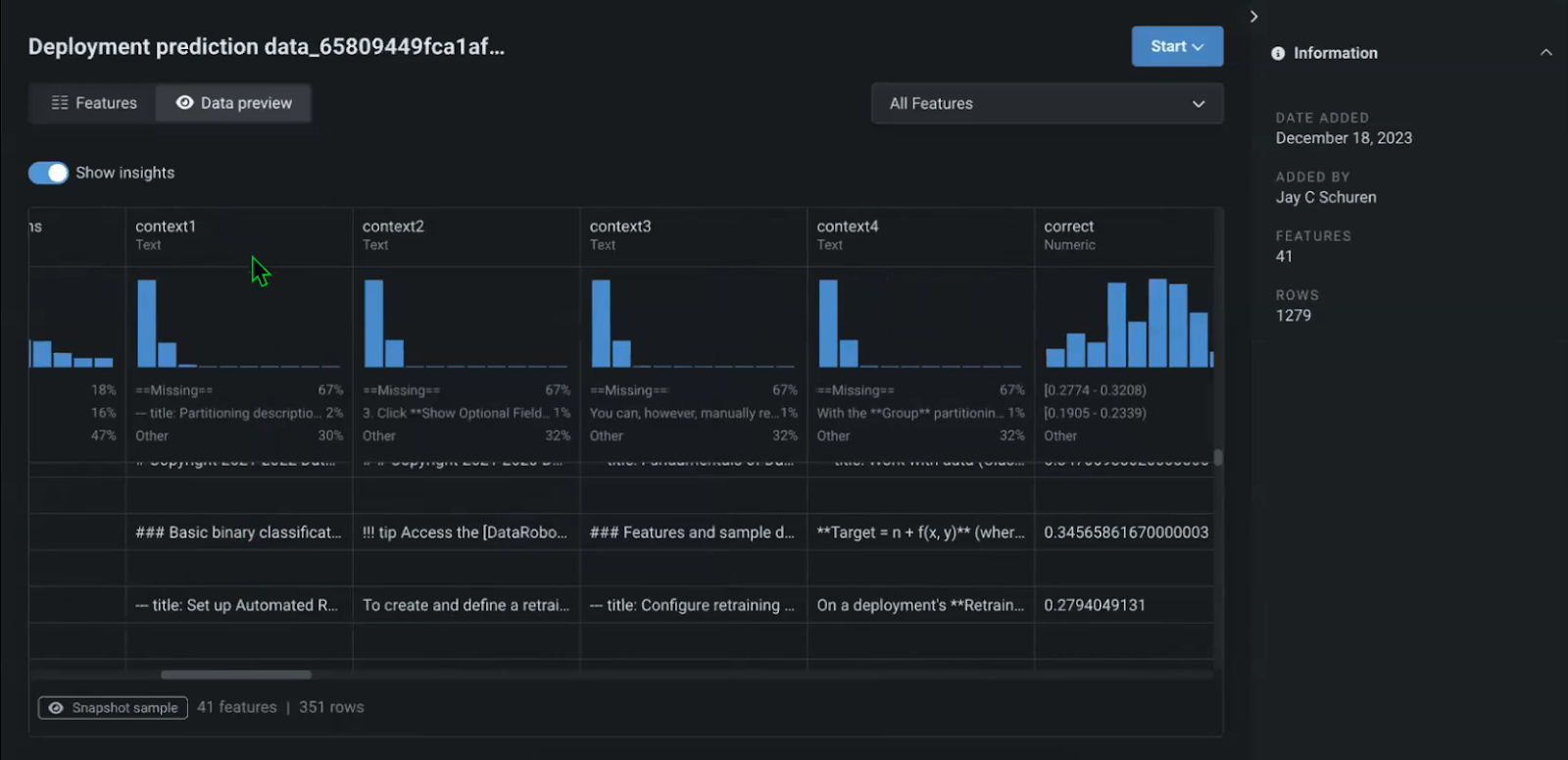

The lineage of the model starts as soon as it's registered, tracking when it was built, for which purpose, and who built it. Immediately, within the Console, you can also start tracking out-of-the-box metrics to monitor performance and add custom metrics relevant to your specific use case.

For example, Groundedness might be an important long-term metric that allows you to understand how well the context that you provide (your source documents) fits the model (what percentage of your source documents is used to generate the answer). This allows you to understand whether you're using current and relevant information in your solution and update it if necessary.
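To illustrate what a custom metric of this kind might look like, here is a deliberately simplified proxy: the fraction of answer tokens that also appear in the retrieved context. This is an assumption made for illustration, not the Groundedness metric as implemented in DataRobot, and the function names and sample strings are invented.

```python
import re


def tokens(text):
    """Lowercased set of alphanumeric tokens in the text."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def groundedness_proxy(answer, context_chunks):
    """Fraction of answer tokens that also appear in the retrieved context."""
    answer_tokens = tokens(answer)
    if not answer_tokens:
        return 0.0
    context_tokens = set().union(*(tokens(c) for c in context_chunks))
    return len(answer_tokens & context_tokens) / len(answer_tokens)


# Invented sample strings, used only to exercise the function.
score = groundedness_proxy(
    "Revenue grew in the data center segment",
    ["Data center revenue grew this quarter"],
)
print(f"groundedness proxy: {score:.2f}")
```

A score that drifts downward over time would suggest that answers rely less and less on the supplied documents, which is a signal to revisit the source data or the retrieval setup.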

With that, you're also tracking the whole pipeline for each question and answer, including the context that was retrieved and passed to the model along with the output it produced. This also includes the source document that each specific answer came from.
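A record of this kind could be represented as one structured entry per question and answer. The sketch below is a hypothetical shape for such an entry; `RagTrace` and its field names are assumptions, not the schema DataRobot uses, and the values are placeholders.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class RagTrace:
    """One logged question/answer pair with the context that produced it (hypothetical)."""

    question: str
    answer: str
    context_chunks: list = field(default_factory=list)
    source_documents: list = field(default_factory=list)


trace = RagTrace(
    question="<user question>",
    answer="<model answer>",
    context_chunks=["<retrieved chunk>"],
    source_documents=["<source file name>"],
)
line = json.dumps(asdict(trace))  # one JSON line per question/answer pair
print(line)
```

Storing the retrieved chunks and their source documents next to each answer is what makes it possible to audit an individual response later.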

How to Choose the Right LLM for Your Use Case

Overall, the process of testing LLMs and figuring out which ones are the right fit for your use case is a multifaceted endeavor that requires careful consideration of various factors. A variety of settings can be applied to each LLM to drastically change its performance.

This underscores the importance of experimentation and continuous iteration to ensure the robustness and effectiveness of deployed solutions. Only by comprehensively testing models against real-world scenarios can users identify potential limitations and areas for improvement before the solution is live in production.

A robust framework that combines live interactions, backend configurations, and thorough monitoring is required to maximize the effectiveness and reliability of generative AI solutions, ensuring they deliver accurate and relevant responses to user queries.

By combining the versatile library of generative AI components in HuggingFace with an integrated approach to model experimentation and deployment in DataRobot, organizations can quickly iterate and deliver production-grade generative AI solutions ready for the real world.

About the author

Nathaniel Daly is a Senior Product Manager at DataRobot focusing on AutoML and time series products. He's focused on bringing advances in data science to users so that they can leverage this value to solve real-world business problems. He holds a degree in Mathematics from the University of California, Berkeley.