Mark Hamilton, an MIT PhD student in electrical engineering and computer science and affiliate of MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL), wants to use machines to understand how animals communicate. To do that, he set out first to create a system that can learn human language “from scratch.”

“Funny enough, the key moment of inspiration came from the movie ‘March of the Penguins.’ There’s a scene where a penguin falls while crossing the ice, and lets out a little belabored groan while getting up. When you watch it, it’s almost obvious that this groan is standing in for a four-letter word. This was the moment where we thought, maybe we need to use audio and video to learn language,” says Hamilton. “Is there a way we could let an algorithm watch TV all day and from this figure out what we’re talking about?”

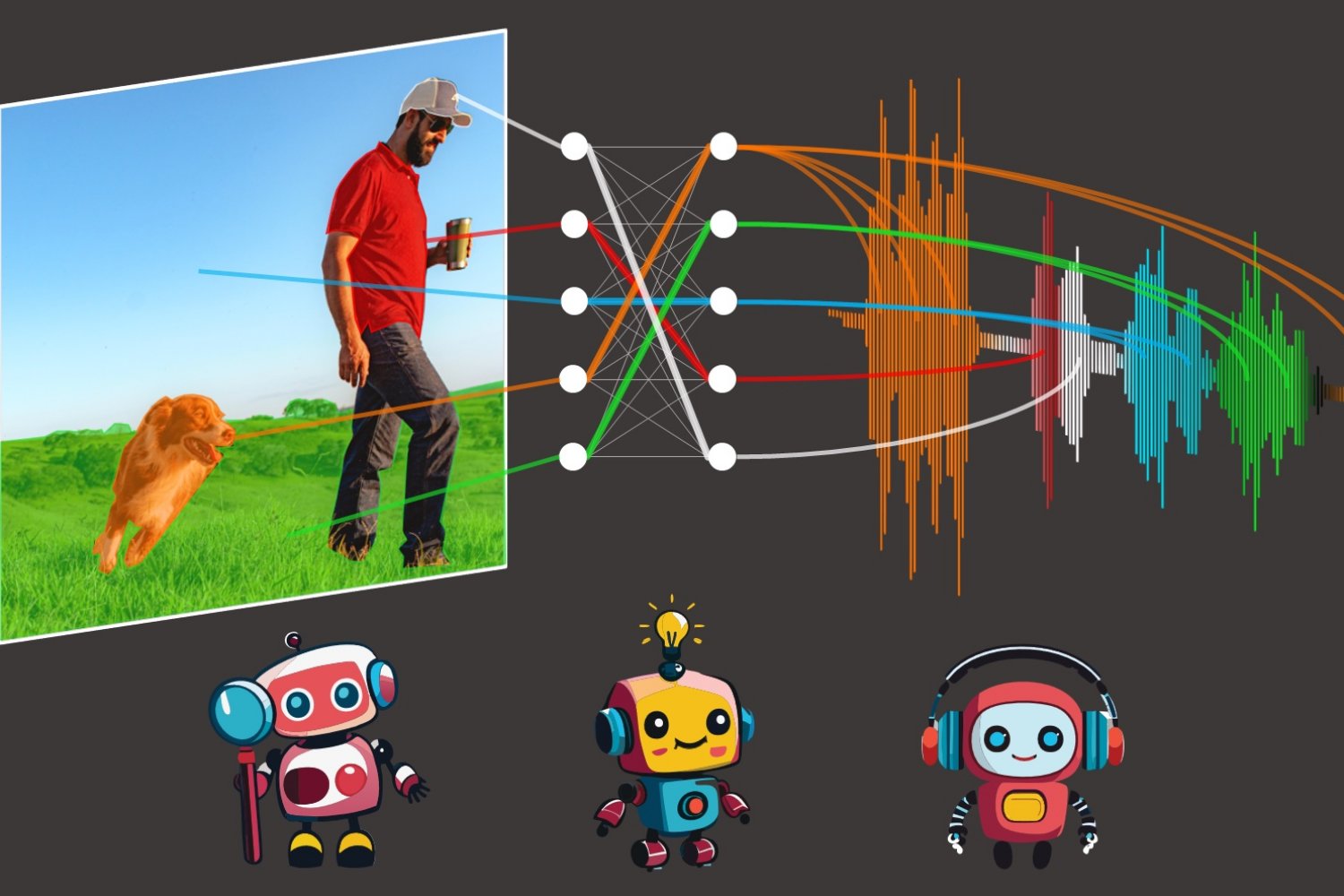

“Our model, ‘DenseAV,’ aims to learn language by predicting what it’s seeing from what it’s hearing, and vice versa. For example, if you hear the sound of someone saying ‘bake the cake at 350’ chances are you might be seeing a cake or an oven. To succeed at this audio-video matching game across millions of videos, the model has to learn what people are talking about,” says Hamilton.

Once they trained DenseAV on this matching game, Hamilton and his colleagues looked at which pixels the model looked for when it heard a sound. For example, when someone says “dog,” the algorithm immediately starts looking for dogs in the video stream. By seeing which pixels are selected by the algorithm, one can discover what the algorithm thinks a word means.

Interestingly, a similar search process happens when DenseAV listens to a dog barking: It searches for a dog in the video stream. “This piqued our interest. We wanted to see if the algorithm knew the difference between the word ‘dog’ and a dog’s bark,” says Hamilton. The team explored this by giving DenseAV a “two-sided brain.” They found that one side of DenseAV’s brain naturally focused on language, like the word “dog,” and the other side focused on sounds like barking. This showed that DenseAV not only learned the meaning of words and the locations of sounds, but also learned to distinguish between these types of cross-modal connections, all without human intervention or any knowledge of written language.

One branch of applications is learning from the massive amount of video published to the internet each day: “We want systems that can learn from massive amounts of video content, such as instructional videos,” says Hamilton. “Another exciting application is understanding new languages, like dolphin or whale communication, which don’t have a written form of communication. Our hope is that DenseAV can help us understand these languages that have evaded human translation efforts since the beginning. Finally, we hope that this method can be used to discover patterns between other pairs of signals, like the seismic sounds the earth makes and its geology.”

A formidable challenge lay ahead of the team: learning language without any text input. Their objective was to rediscover the meaning of language from a blank slate, without using pre-trained language models. This approach is inspired by how children learn to understand language by observing and listening to their environment.

To achieve this feat, DenseAV uses two main components to process audio and visual data separately. This separation made it impossible for the algorithm to cheat by letting the visual side look at the audio, or vice versa. It forced the algorithm to recognize objects and created detailed and meaningful features for both audio and visual signals. DenseAV learns by comparing pairs of audio and visual signals to find which signals match and which signals do not. This method, called contrastive learning, doesn’t require labeled examples, and allows DenseAV to figure out the important predictive patterns of language itself.
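The matching objective described above can be sketched in a few lines. This is a minimal illustration of a generic symmetric contrastive (InfoNCE-style) loss, not the authors’ implementation: the function name `info_nce`, the `temperature` value, and the assumption that clip-level similarity scores are already available as a square matrix are all hypothetical choices made for the example.

```python
import math

def info_nce(sim, temperature=0.1):
    """Symmetric contrastive loss over a square similarity matrix.

    sim[i][j] is the similarity between audio clip i and video clip j.
    True pairs are assumed to sit on the diagonal (i == j).
    The temperature is an illustrative value, not taken from the article.
    """
    n = len(sim)

    def directional_loss(rows):
        total = 0.0
        for i, row in enumerate(rows):
            logits = [s / temperature for s in row]
            m = max(logits)  # subtract the max for numerical stability
            log_z = m + math.log(sum(math.exp(l - m) for l in logits))
            total += log_z - logits[i]  # -log softmax at the true pair
        return total / n

    # Transpose to score the video -> audio direction as well.
    cols = [list(c) for c in zip(*sim)]
    return 0.5 * (directional_loss(sim) + directional_loss(cols))

# Matching pairs score high on the diagonal, so the loss is near zero.
aligned = [[1.0, 0.0], [0.0, 1.0]]
# Here every audio clip looks most like the wrong video, so the loss is large.
mismatched = [[0.0, 1.0], [1.0, 0.0]]
print(info_nce(aligned) < info_nce(mismatched))  # prints True
```

No labels appear anywhere in the loss: the only supervision is which audio track came with which video, which is why the approach can be trained on raw video.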

One major difference between DenseAV and previous algorithms is that prior works focused on a single notion of similarity between sound and images. An entire audio clip like someone saying “the dog sat on the grass” was matched to an entire image of a dog. This didn’t allow previous methods to discover fine-grained details, like the connection between the word “grass” and the grass underneath the dog. The team’s algorithm searches for and aggregates all the possible matches between an audio clip and an image’s pixels. This not only improved performance, but allowed the team to precisely localize sounds in a way that previous algorithms could not. “Conventional methods use a single class token, but our approach compares every pixel and every second of sound. This fine-grained method lets DenseAV make more detailed connections for better localization,” says Hamilton.
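To make the dense comparison concrete, here is a small sketch that scores every audio time step against every pixel and then aggregates the results. The aggregation shown (strongest pixel per time step, averaged over time) is one plausible choice assumed for illustration, and the function names and toy feature vectors are hypothetical; the article does not specify these details.

```python
def dot(u, v):
    """Inner product of two equal-length feature vectors."""
    return sum(a * b for a, b in zip(u, v))

def dense_similarity(audio_feats, pixel_feats):
    """Compare every audio time step with every pixel.

    audio_feats: one feature vector per audio time step.
    pixel_feats: one feature vector per image location.
    Returns (clip_score, best_pixels), where best_pixels[t] is the index
    of the pixel that best matches audio step t.
    """
    # sim[t][p]: how well audio step t matches pixel p.
    sim = [[dot(a, p) for p in pixel_feats] for a in audio_feats]
    best_pixels = [max(range(len(row)), key=row.__getitem__) for row in sim]
    # Assumed aggregation: strongest pixel per step, averaged over time.
    clip_score = sum(max(row) for row in sim) / len(sim)
    return clip_score, best_pixels

# Toy example: two audio steps ("dog", "grass") and three pixels
# (a dog pixel, a grass pixel, and an unrelated sky pixel).
audio = [[1.0, 0.0], [0.0, 1.0]]
pixels = [[1.0, 0.0], [0.0, 1.0], [0.0, 0.0]]
score, best = dense_similarity(audio, pixels)
print(score)  # prints 1.0
print(best)   # prints [0, 1]
```

Because each time step keeps its own best-matching pixel, the same computation that produces the clip-level score also yields a localization: the word “grass” points at the grass pixel rather than at the image as a whole.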

The researchers trained DenseAV on AudioSet, which includes 2 million YouTube videos. They also created new datasets to test how well the model can link sounds and images. In these tests, DenseAV outperformed other top models in tasks like identifying objects from their names and sounds. “Previous datasets only supported coarse evaluations, so we created a dataset using semantic segmentation datasets. This helps with pixel-perfect annotations for precise evaluation of our model’s performance. We can prompt the algorithm with specific sounds or images and get those detailed localizations,” says Hamilton.

Due to the massive amount of data involved, the project took about a year to complete. The team says that transitioning to a large transformer architecture presented challenges, as these models can easily overlook fine-grained details. Encouraging the model to focus on these details was a significant hurdle.

Looking ahead, the team aims to create systems that can learn from massive amounts of video-only or audio-only data. This is crucial for new domains where there is plenty of either mode, but not both together. They also aim to scale this up using larger backbones and possibly integrate knowledge from language models to improve performance.

“Recognizing and segmenting visual objects in images, as well as environmental sounds and spoken words in audio recordings, are each difficult problems in their own right. Historically researchers have relied upon expensive, human-provided annotations in order to train machine learning models to accomplish these tasks,” says David Harwath, assistant professor in computer science at the University of Texas at Austin who was not involved in the work. “DenseAV makes significant progress towards developing methods that can learn to solve these tasks simultaneously by simply observing the world through sight and sound, based on the insight that the things we see and interact with often make sound, and we also use spoken language to talk about them. This model also makes no assumptions about the specific language that is being spoken, and could therefore in principle learn from data in any language. It would be exciting to see what DenseAV could learn by scaling it up to thousands or millions of hours of video data across a multitude of languages.”

Additional authors on a paper describing the work are Andrew Zisserman, professor of computer vision engineering at the University of Oxford; John R. Hershey, Google AI Perception researcher; and William T. Freeman, MIT electrical engineering and computer science professor and CSAIL principal investigator. Their research was supported, in part, by the U.S. National Science Foundation, a Royal Society Research Professorship, and an EPSRC Programme Grant Visual AI. This work will be presented at the IEEE/CVF Computer Vision and Pattern Recognition Conference this month.