You’ve doubtless heard {that a} image is value a thousand phrases, however can a big language mannequin (LLM) get the image if it’s by no means seen photos earlier than?

Because it seems, language fashions which are educated purely on textual content have a strong understanding of the visible world. They’ll write image-rendering code to generate complicated scenes with intriguing objects and compositions — and even when that data just isn’t used correctly, LLMs can refine their photos. Researchers from MIT’s Pc Science and Synthetic Intelligence Laboratory (CSAIL) noticed this when prompting language fashions to self-correct their code for various photos, the place the techniques improved on their easy clipart drawings with every question.

The visible data of those language fashions is gained from how ideas like shapes and colours are described throughout the web, whether or not in language or code. When given a course like “draw a parrot within the jungle,” customers jog the LLM to think about what it’s learn in descriptions earlier than. To evaluate how a lot visible data LLMs have, the CSAIL workforce constructed a “imaginative and prescient checkup” for LLMs: utilizing their “Visible Aptitude Dataset,” they examined the fashions’ talents to attract, acknowledge, and self-correct these ideas. Accumulating every closing draft of those illustrations, the researchers educated a pc imaginative and prescient system that identifies the content material of actual pictures.

“We primarily prepare a imaginative and prescient system with out straight utilizing any visible information,” says Tamar Rott Shaham, co-lead writer of the examine and an MIT electrical engineering and pc science (EECS) postdoc at CSAIL. “Our workforce queried language fashions to jot down image-rendering codes to generate information for us after which educated the imaginative and prescient system to judge pure photos. We had been impressed by the query of how visible ideas are represented by way of different mediums, like textual content. To specific their visible data, LLMs can use code as a typical floor between textual content and imaginative and prescient.”

To construct this dataset, the researchers first queried the fashions to generate code for various shapes, objects, and scenes. Then, they compiled that code to render easy digital illustrations, like a row of bicycles, displaying that LLMs perceive spatial relations nicely sufficient to attract the two-wheelers in a horizontal row. As one other instance, the mannequin generated a car-shaped cake, combining two random ideas. The language mannequin additionally produced a glowing gentle bulb, indicating its capability to create visible results.

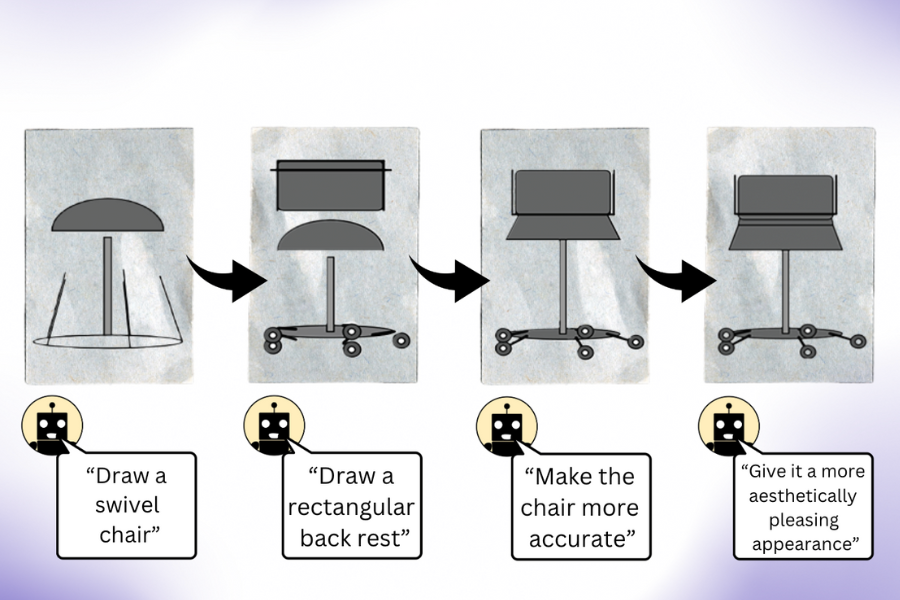

“Our work exhibits that once you question an LLM (with out multimodal pre-training) to create a picture, it is aware of way more than it appears,” says co-lead writer, EECS PhD scholar, and CSAIL member Pratyusha Sharma. “Let’s say you requested it to attract a chair. The mannequin is aware of different issues about this piece of furnishings that it might not have instantly rendered, so customers can question the mannequin to enhance the visible it produces with every iteration. Surprisingly, the mannequin can iteratively enrich the drawing by bettering the rendering code to a major extent.”

The researchers gathered these illustrations, which had been then used to coach a pc imaginative and prescient system that may acknowledge objects inside actual pictures (regardless of by no means having seen one earlier than). With this artificial, text-generated information as its solely reference level, the system outperforms different procedurally generated picture datasets that had been educated with genuine pictures.

The CSAIL workforce believes that combining the hidden visible data of LLMs with the inventive capabilities of different AI instruments like diffusion fashions may be useful. Techniques like Midjourney typically lack the know-how to persistently tweak the finer particulars in a picture, making it tough for them to deal with requests like lowering what number of automobiles are pictured, or inserting an object behind one other. If an LLM sketched out the requested change for the diffusion mannequin beforehand, the ensuing edit might be extra passable.

The irony, as Rott Shaham and Sharma acknowledge, is that LLMs typically fail to acknowledge the identical ideas that they will draw. This turned clear when the fashions incorrectly recognized human re-creations of photos throughout the dataset. Such numerous representations of the visible world doubtless triggered the language fashions’ misconceptions.

Whereas the fashions struggled to understand these summary depictions, they demonstrated the creativity to attract the identical ideas otherwise every time. When the researchers queried LLMs to attract ideas like strawberries and arcades a number of instances, they produced footage from numerous angles with various shapes and colours, hinting that the fashions may need precise psychological imagery of visible ideas (somewhat than reciting examples they noticed earlier than).

The CSAIL workforce believes this process might be a baseline for evaluating how nicely a generative AI mannequin can prepare a pc imaginative and prescient system. Moreover, the researchers look to broaden the duties they problem language fashions on. As for his or her latest examine, the MIT group notes that they don’t have entry to the coaching set of the LLMs they used, making it difficult to additional examine the origin of their visible data. Sooner or later, they intend to discover coaching a good higher imaginative and prescient mannequin by letting the LLM work straight with it.

Sharma and Rott Shaham are joined on the paper by former CSAIL affiliate Stephanie Fu ’22, MNG ’23 and EECS PhD college students Manel Baradad, Adrián Rodríguez-Muñoz ’22, and Shivam Duggal, who’re all CSAIL associates; in addition to MIT Affiliate Professor Phillip Isola and Professor Antonio Torralba. Their work was supported, partly, by a grant from the MIT-IBM Watson AI Lab, a LaCaixa Fellowship, the Zuckerman STEM Management Program, and the Viterbi Fellowship. They current their paper this week on the IEEE/CVF Pc Imaginative and prescient and Sample Recognition Convention.